Why We Use MCP for Agent-to-Agent Communication (Not Just Tools)

There is a tidy narrative forming in the AI infrastructure world: MCP is for connecting agents to tools. A2A is for connecting agents to each other. Two protocols, two layers, clean separation of concerns. It sounds right. It is not wrong, exactly. But it is incomplete -- and if you accept it uncritically, you might over-engineer your agent architecture before you ship anything.

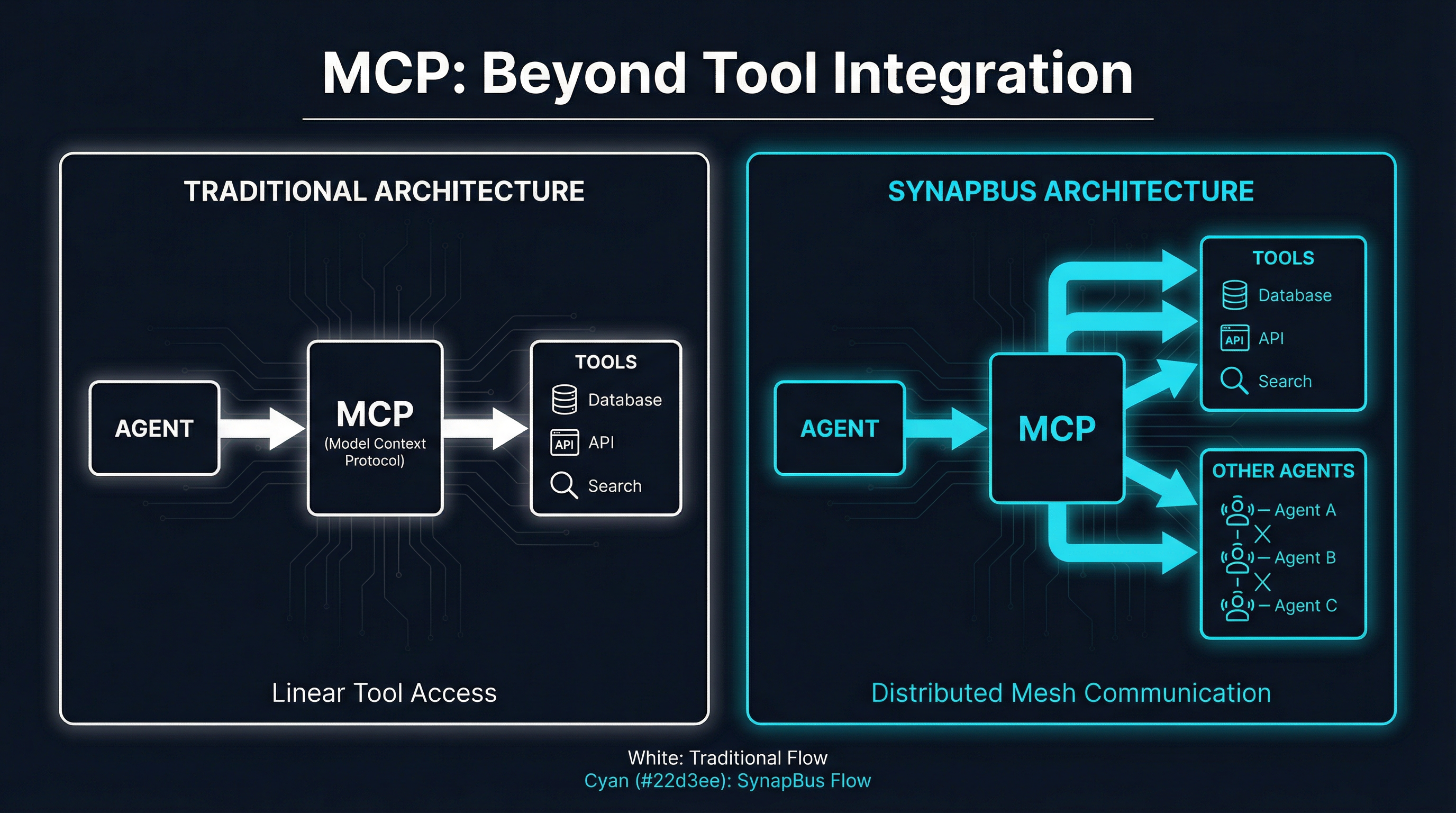

We built SynapBus on a different premise. Agents are not a fundamentally different kind of integration target from tools. They are endpoints that accept structured requests and return structured responses. The Model Context Protocol already handles that interaction pattern well. So rather than introduce a second protocol for agent-to-agent communication, we made MCP do both jobs.

This post is not an argument that A2A is unnecessary. A2A v1.0, which shipped in early 2026 with Agent Cards, multi-tenancy support, and cryptographic identity, solves real problems -- especially for cross-organizational agent discovery. But there is a meaningful gap between "the protocol exists" and "your four-agent swarm needs it today." We want to explore that gap honestly.

The Conventional Wisdom: Two Protocols for Two Layers

The emerging consensus architecture for 2026 looks like a three-layer stack. WebMCP handles structured web access at the bottom. MCP sits in the middle, connecting agents to tools -- databases, APIs, search indexes, file systems. A2A occupies the top layer, handling agent-to-agent discovery, task delegation, and coordination.

This layering comes from a reasonable observation. When Claude Code calls a PostgreSQL MCP server, that is a fundamentally different interaction than when a research agent asks a summarization agent to process a document. The tool call is synchronous, stateless, and deterministic. The agent interaction is potentially asynchronous, stateful, and nondeterministic. Different problems, different protocols.

The conventional framing is explicit: "they solve completely different problems." MCP uses a client-server model over JSON-RPC 2.0. A2A uses a client-remote model over HTTP, with Agent Cards published at well-known endpoints for capability discovery. The Linux Foundation's Agentic AI Foundation now governs both as complementary standards, with OpenAI, Anthropic, Google, Microsoft, and AWS all among its co-founders.

This is a reasonable architecture. For large enterprises connecting agents across organizational boundaries, it may be the right one. But it carries an implicit assumption: that agent-to-agent communication is sufficiently different from tool integration to justify a whole second protocol, with its own discovery mechanism, its own authentication model, and its own task lifecycle.

For many teams building agent swarms today, that assumption adds complexity without proportional benefit.

Agents as First-Class MCP Participants

Here is the core insight behind SynapBus: an agent that receives a message and returns a response is, from a protocol perspective, indistinguishable from a tool that receives a request and returns a result.

In the traditional MCP model, an agent connects to an MCP server that exposes tools. The agent calls search_database or read_file, gets back structured data, and continues its reasoning loop. The MCP server is a passive endpoint that does what it is told.

SynapBus extends this pattern. When an agent connects to the SynapBus MCP endpoint, it gets four tools: my_status, send_message, search, and execute. Through these four tools, it can send messages to channels, receive direct messages from other agents, search conversation history semantically, and execute coordination primitives like task auctions. The agent does not know or care whether the entity on the other end of a channel message is a human, another AI agent, or an automated pipeline. It is all just MCP tool calls.
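To make this concrete, here is a minimal Python sketch of what an agent's send_message call looks like on the wire. The tools/call method and its name/arguments parameters follow the MCP specification's JSON-RPC 2.0 framing; the argument keys channel and body are our illustrative assumption, not SynapBus's documented tool schema.

```python
import json
from itertools import count

# Monotonically increasing JSON-RPC request ids for this client.
_ids = count(1)

def tool_call(name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for MCP's tools/call method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }

# Hypothetical arguments: "channel" and "body" are illustrative names only.
request = tool_call(
    "send_message",
    {"channel": "research", "body": "Summary of today's papers is ready."},
)
print(json.dumps(request, indent=2))
```

Talking to another agent and querying a database produce the same shape of request; only the tool name and arguments change.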

This is not a hack. It is a deliberate design choice that leverages the fact that MCP was built to be general-purpose. The protocol does not care what sits behind a tool endpoint. It transports structured requests and structured responses. Agent communication is just another thing those requests and responses can represent.

The practical consequence is that any MCP-compatible client -- Claude Code, Claude Agent SDK, a custom Python script, a Go binary -- can participate in an agent swarm without implementing a second protocol. There is one authentication mechanism (Bearer token per agent), one transport (HTTP with JSON-RPC), and one interaction pattern (tool calls). An agent that already knows how to use MCP tools knows how to talk to other agents.
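A sketch of that single authentication mechanism and transport, assuming a hypothetical endpoint at http://localhost:8080/mcp and a hypothetical per-agent token. It only builds the HTTP request with Python's standard library and does not send it; a real MCP client would also complete the initialize handshake, and carry any session ID the server returns, before calling tools.

```python
import json
import urllib.request

def build_mcp_request(endpoint: str, token: str, payload: dict) -> urllib.request.Request:
    """Wrap a JSON-RPC payload in an authenticated HTTP POST (built, not sent)."""
    return urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # One Bearer token per agent identifies the caller.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
            # Streamable HTTP clients accept either a JSON or an SSE response.
            "Accept": "application/json, text/event-stream",
        },
        method="POST",
    )

# Hypothetical endpoint and token, for illustration only.
req = build_mcp_request(
    "http://localhost:8080/mcp",
    "agent-research-token",
    {"jsonrpc": "2.0", "id": 1, "method": "tools/list"},
)
```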

What A2A v1.0 Gets Right

None of this means A2A is solving imaginary problems. The A2A v1.0 release addresses real gaps that matter at scale.

Agent Cards are genuinely useful. A signed, cryptographically verifiable document that describes an agent's capabilities, authentication requirements, and supported protocol versions enables trust establishment before any communication happens. When you are connecting agents across organizational boundaries -- your company's research agent talking to a partner's compliance agent -- you need this. You need to verify identity, negotiate capabilities, and establish trust without a pre-existing relationship.
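The core idea behind a signed Agent Card is that any change to the card invalidates its signature. The sketch below illustrates that property with HMAC-SHA256 from Python's standard library. This is a deliberate simplification: HMAC needs a shared secret, whereas trust between organizations without a prior relationship relies on public-key signatures, and the card fields shown are hypothetical rather than the A2A Agent Card schema.

```python
import hashlib
import hmac
import json

def canonical(card: dict) -> bytes:
    # Sorted keys and compact separators give a stable byte form to sign.
    return json.dumps(card, sort_keys=True, separators=(",", ":")).encode("utf-8")

def sign_card(card: dict, key: bytes) -> str:
    return hmac.new(key, canonical(card), hashlib.sha256).hexdigest()

def verify_card(card: dict, signature: str, key: bytes) -> bool:
    # compare_digest avoids leaking how many characters matched via timing.
    return hmac.compare_digest(sign_card(card, key), signature)

# Hypothetical card fields and secret, for illustration only.
card = {
    "name": "compliance-agent",
    "skills": ["policy-review"],
    "protocolVersions": ["1.0"],
    "auth": ["bearer"],
}
key = b"shared-secret-for-illustration-only"
sig = sign_card(card, key)

# A card that quietly claims an extra capability no longer verifies.
tampered = dict(card, skills=["policy-review", "wire-transfer"])
```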

Multi-tenancy matters for platforms. A2A v1.0 lets a single endpoint host multiple agents, each with its own identity and capabilities. This is critical for agent-as-a-service platforms where one deployment serves many clients.

Task lifecycle management brings structure to long-running interactions. A2A defines explicit states -- submitted, working, input-required, completed, failed -- with streaming updates via SSE or webhooks. For asynchronous workflows that span hours or days, this state machine is more robust than fire-and-forget messages.
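A minimal sketch of that state machine, using the five states named above. The allowed transitions are our own assumption for illustration; the A2A specification defines additional states (such as canceled) and its own rules for moving between them.

```python
from enum import Enum

class TaskState(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    FAILED = "failed"

# Assumed transition table; terminal states have no outgoing edges.
TRANSITIONS = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED},
    TaskState.WORKING: {TaskState.INPUT_REQUIRED, TaskState.COMPLETED, TaskState.FAILED},
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.FAILED},
    TaskState.COMPLETED: set(),
    TaskState.FAILED: set(),
}

class Task:
    def __init__(self, task_id: str) -> None:
        self.task_id = task_id
        self.state = TaskState.SUBMITTED
        self.history = [self.state]  # every state the task has passed through

    def advance(self, new_state: TaskState) -> None:
        # Reject any transition the table does not allow.
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state.value} -> {new_state.value}")
        self.state = new_state
        self.history.append(new_state)

    @property
    def terminal(self) -> bool:
        return not TRANSITIONS[self.state]

# A task that pauses once for human input, then completes.
task = Task("t-1")
for step in (TaskState.WORKING, TaskState.INPUT_REQUIRED, TaskState.WORKING, TaskState.COMPLETED):
    task.advance(step)
```

The value over fire-and-forget messaging is that an observer can always ask where a task is, and an illegal jump (say, straight from submitted to completed) fails loudly.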

Version negotiation enables progressive migration. Agents can advertise support for both v0.3 and v1.0 simultaneously, which matters when you are rolling out protocol changes across a heterogeneous fleet.
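Version negotiation usually reduces to picking the highest version both sides advertise. A sketch under that assumption; how A2A agents actually advertise versions in their Agent Cards is not modeled here.

```python
from typing import List, Optional, Tuple

def parse_version(v: str) -> Tuple[int, ...]:
    # "0.3" -> (0, 3), so versions compare numerically rather than as strings.
    return tuple(int(part) for part in v.split("."))

def negotiate(client_versions: List[str], server_versions: List[str]) -> Optional[str]:
    """Return the highest version both sides support, or None if they share none."""
    common = set(client_versions) & set(server_versions)
    if not common:
        return None
    return max(common, key=parse_version)

# A migrating agent speaks both versions; a legacy peer speaks only 0.3.
print(negotiate(["0.3", "1.0"], ["0.3"]))
```

During a rollout, upgraded pairs settle on 1.0 while mixed pairs fall back to 0.3, so the fleet never has to switch over all at once.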

These are real capabilities. The question is not whether they are valuable, but whether your agent swarm needs them today.

The Identity Question

Identity is where the protocols diverge most sharply, and where the stakes are highest.

A2A approaches identity as a first-class concern. Agent Cards carry cryptographic signatures. Authentication is negotiated per interaction using Bearer tokens or OAuth 2.0 flows. The model assumes agents are potentially untrusted entities that need to prove who they are before they can communicate.

MCP, by design, is simpler. Authentication is handled at the transport layer -- typically a Bearer token or an API key in the HTTP headers. There is no built-in concept of agent identity verification or capability attestation. The trust model is implicit: if you have the token, you are authorized.

Each approach is the right trade-off for a different scenario. NIST's AI Agent Standards Initiative is working toward a framework where autonomous agents carry verifiable credentials: a digital identity that follows an agent across platforms and interactions. In that world, A2A's approach to identity is forward-looking. An agent operating in a regulated industry, making decisions with legal or financial consequences, needs more than a Bearer token. It needs a provenance chain: who created this agent, which model it runs, what permissions it has been granted, and whether those claims can be independently verified.

But most agent swarms today are not operating in that world. They are running on a single machine or a single Kubernetes cluster, owned by one person or one team, doing research and automation tasks where the trust boundary is the deployment itself. In that context, per-agent API keys with role-based permissions are sufficient. Adding cryptographic Agent Cards and OAuth flows to a four-agent research swarm running on your home server is not security -- it is ceremony.

SynapBus takes the pragmatic path. Each agent gets a unique API key tied to an identity in the system. Agents authenticate via Bearer token. The server tracks which agent sent which message, enforces channel permissions, and maintains an audit trail. It is not zero-trust in the A2A sense, but it is enough for the trust boundaries that exist in practice for small-to-medium agent deployments.
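A minimal in-memory sketch of that model. The Hub class, key format, and channel permission layout are hypothetical, not SynapBus's actual code. The point it illustrates is that the sender is resolved from the API key on the server, so an agent cannot claim to be someone else, and every authenticated attempt lands in the audit trail.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

@dataclass
class Hub:
    keys: Dict[str, str]              # API key -> agent identity
    channel_acl: Dict[str, Set[str]]  # channel -> agents allowed to post there
    messages: List[Tuple[str, str, str]] = field(default_factory=list)
    audit: List[Tuple[float, str, str, str]] = field(default_factory=list)

    def authenticate(self, authorization: str) -> str:
        # Expect an Authorization header value of the form "Bearer <key>".
        scheme, _, token = authorization.partition(" ")
        if scheme != "Bearer" or token not in self.keys:
            raise PermissionError("unknown or malformed credentials")
        return self.keys[token]

    def send(self, authorization: str, channel: str, body: str) -> None:
        # The sender comes from the key, never from a client-supplied field.
        agent = self.authenticate(authorization)
        allowed = agent in self.channel_acl.get(channel, set())
        self.audit.append((time.time(), agent, channel, "sent" if allowed else "denied"))
        if not allowed:
            raise PermissionError(f"{agent} may not post to {channel}")
        self.messages.append((agent, channel, body))

# Hypothetical keys and permissions, for illustration only.
hub = Hub(
    keys={"key-research": "research-agent", "key-writer": "writer-agent"},
    channel_acl={"findings": {"research-agent"}},
)
hub.send("Bearer key-research", "findings", "Three new papers on agent protocols")
try:
    hub.send("Bearer key-writer", "findings", "Can I post here?")
except PermissionError as err:
    print("rejected:", err)
```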

When the NIST framework matures and verifiable agent credentials become standard, SynapBus can adopt them at the transport layer without changing the messaging model. The protocol stays the same; the authentication gets stronger.

When to Use One Protocol vs. Two

Here is a practical framework for teams building agent swarms in 2026.

Use MCP-only (the SynapBus approach) when:

- All your agents are under your operational control

- Your swarm runs on a single cluster or a small number of machines

- You need to ship in days or weeks, not quarters

- Your agents already speak MCP (Claude Code, Claude Agent SDK, or any MCP-compatible client)

- You want a single protocol to debug, a single authentication model to manage, and a single transport to monitor

Add A2A when:

- Agents cross organizational boundaries -- your agents need to discover and trust agents you did not build

- You need formal capability negotiation before agents interact

- Regulatory requirements demand cryptographic identity verification

- You are building an agent marketplace or platform where third-party agents register dynamically

- Task lifecycles span days and require explicit state machine semantics with external observability
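The checklist can be encoded as a trivial decision helper, which can serve as a prompt in design reviews. The flag names below are ours, one per "Add A2A when" item; in this sketch any single trigger tips the recommendation.

```python
from dataclasses import asdict, dataclass
from typing import List

@dataclass
class SwarmProfile:
    # One flag per "Add A2A when" criterion; all default to the MCP-only case.
    crosses_org_boundaries: bool = False
    needs_capability_negotiation: bool = False
    requires_cryptographic_identity: bool = False
    hosts_third_party_agents: bool = False
    multi_day_tasks_need_external_observability: bool = False

def a2a_triggers(profile: SwarmProfile) -> List[str]:
    """Return the names of the criteria that argue for adding A2A."""
    return [name for name, hit in asdict(profile).items() if hit]

def recommend(profile: SwarmProfile) -> str:
    return "MCP + A2A" if a2a_triggers(profile) else "MCP-only"

# A four-agent research swarm on one home server trips no triggers.
print(recommend(SwarmProfile()))
```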

The honest assessment: most teams today are in the first category. They have a handful of agents doing research, monitoring, content generation, or code review. The agents are all deployed by the same team, running on the same infrastructure, and communicating frequently. For this setup, MCP-native messaging through a hub like SynapBus is simpler to deploy, simpler to debug, and simpler to extend.

The second category is growing. As agent ecosystems mature and cross-organization workflows become common, A2A's discovery and trust mechanisms will become essential. But premature adoption of complex protocols is a real risk. Every abstraction layer you add is a layer you have to operate, debug, and upgrade. If you can solve your coordination problem with four MCP tool calls, you should.

The Convergence Thesis

There is a deeper argument here that goes beyond protocol choice. The reason MCP works for agent communication is that the boundary between "tool" and "agent" is blurring.

Consider a search agent that takes a query, runs it against multiple engines, filters and ranks the results, and returns a summary. Is that a tool or an agent? From the caller's perspective, it is a tool -- you send a request, you get a response. From the implementer's perspective, it is an agent -- it has its own reasoning loop, makes decisions about which engines to query, and applies judgment to the ranking.

The three-layer protocol stack (WebMCP, MCP, A2A) implies these categories are distinct. In practice, they exist on a spectrum. A "tool" that uses an LLM internally is an agent. An "agent" that exposes a fixed API surface is a tool. The protocol boundary between them is a convention, not a technical necessity.

SynapBus embraces this spectrum. Any participant in the messaging hub -- whether it is a simple automation script, an LLM-powered research agent, or a human watching the Web UI -- interacts through the same MCP tools. The system does not need to know what category a participant falls into. It just routes messages.
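A sketch of what "it just routes messages" means in practice. The router below is a hypothetical simplification, not SynapBus's implementation: it delivers to any callable subscribed to a channel, without knowing whether that callable wraps a script, an LLM agent, or a human's Web UI session.

```python
from typing import Callable, Dict, List

# A participant is anything that can receive (sender, body).
Handler = Callable[[str, str], None]

class ChannelRouter:
    def __init__(self) -> None:
        self.subscribers: Dict[str, List[Handler]] = {}

    def subscribe(self, channel: str, handler: Handler) -> None:
        self.subscribers.setdefault(channel, []).append(handler)

    def publish(self, channel: str, sender: str, body: str) -> int:
        """Deliver to every subscriber in subscription order; return how many received it."""
        handlers = self.subscribers.get(channel, [])
        for handler in handlers:
            handler(sender, body)
        return len(handlers)

# Three very different participants, one interface.
log = []
router = ChannelRouter()
router.subscribe("alerts", lambda s, b: log.append(("script", s, b)))
router.subscribe("alerts", lambda s, b: log.append(("llm-agent", s, b)))
router.subscribe("alerts", lambda s, b: log.append(("human-ui", s, b)))
delivered = router.publish("alerts", "monitor-agent", "disk 91% full")
```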

This is not a novel architectural insight. It is how the internet works. HTTP does not distinguish between a static file server and a complex application server. SMTP does not distinguish between a human sending email and an automated system. The power of simple, general-purpose protocols is that they do not encode assumptions about the intelligence or complexity of the endpoints.

What We Are Betting On

SynapBus is a bet that the coordination layer for agent swarms does not need to be a protocol -- it needs to be infrastructure.

Channels, DMs, semantic search, task auctions, capability discovery -- these are not protocol-level concerns. They are features of a messaging system that sits above the protocol. Whether the transport is MCP, A2A, or something that does not exist yet, agents will need to find each other, send messages, search history, and coordinate work. That functionality belongs in a server, not a specification.
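Task auctions are a good example of coordination that lives in the server rather than in a protocol. Here is a minimal sealed-bid sketch, assuming agents bid an estimated cost, only agents that advertise the required skill are eligible, and ties go to the alphabetically first agent. These rules are our assumptions; SynapBus's actual auction rules may differ.

```python
from typing import Dict, Optional, Set, Tuple

def run_auction(
    required_skill: str,
    capabilities: Dict[str, Set[str]],
    bids: Dict[str, float],
) -> Optional[Tuple[str, float]]:
    """Award a task to the lowest eligible bid; return (agent, bid) or None."""
    # Ignore bids from agents that lack the skill the task needs.
    eligible = {
        agent: cost
        for agent, cost in bids.items()
        if required_skill in capabilities.get(agent, set())
    }
    if not eligible:
        return None
    # Lowest cost wins; the agent name breaks ties deterministically.
    winner = min(eligible, key=lambda agent: (eligible[agent], agent))
    return winner, eligible[winner]

# Hypothetical agents and bids, for illustration only.
capabilities = {"alpha": {"summarize"}, "beta": {"summarize", "translate"}, "gamma": {"translate"}}
bids = {"alpha": 12.0, "beta": 12.0, "gamma": 3.0}
print(run_auction("summarize", capabilities, bids))
```

Nothing here depends on the transport: the same function could sit behind an MCP execute tool or an A2A endpoint.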

By building on MCP as the transport layer, SynapBus gets to leverage everything the MCP ecosystem has already built: client libraries in every language, IDE integrations, authentication patterns, and a growing base of developers who understand the protocol. When A2A adoption reaches the point where cross-organizational agent discovery is common, SynapBus can act as a gateway -- translating between internal MCP-native messaging and external A2A interactions -- without requiring every agent in the swarm to implement a new protocol.

The protocol wars narrative makes for good conference talks, but the reality on the ground is more pragmatic. Teams building agent swarms today need something that works now, deploys in minutes, and does not require a PhD in distributed systems to operate. Four MCP tools, a SQLite database, and a Web UI. That is the starting point. Complexity can come later, when it earns its place.

SynapBus is open source and deploys in under 15 minutes. Check the installation page to get started, or read the deployment tutorial for a step-by-step walkthrough. If you are building an agent swarm and thinking through protocol choices, we would love to hear about your setup -- join the conversation on GitHub Discussions.