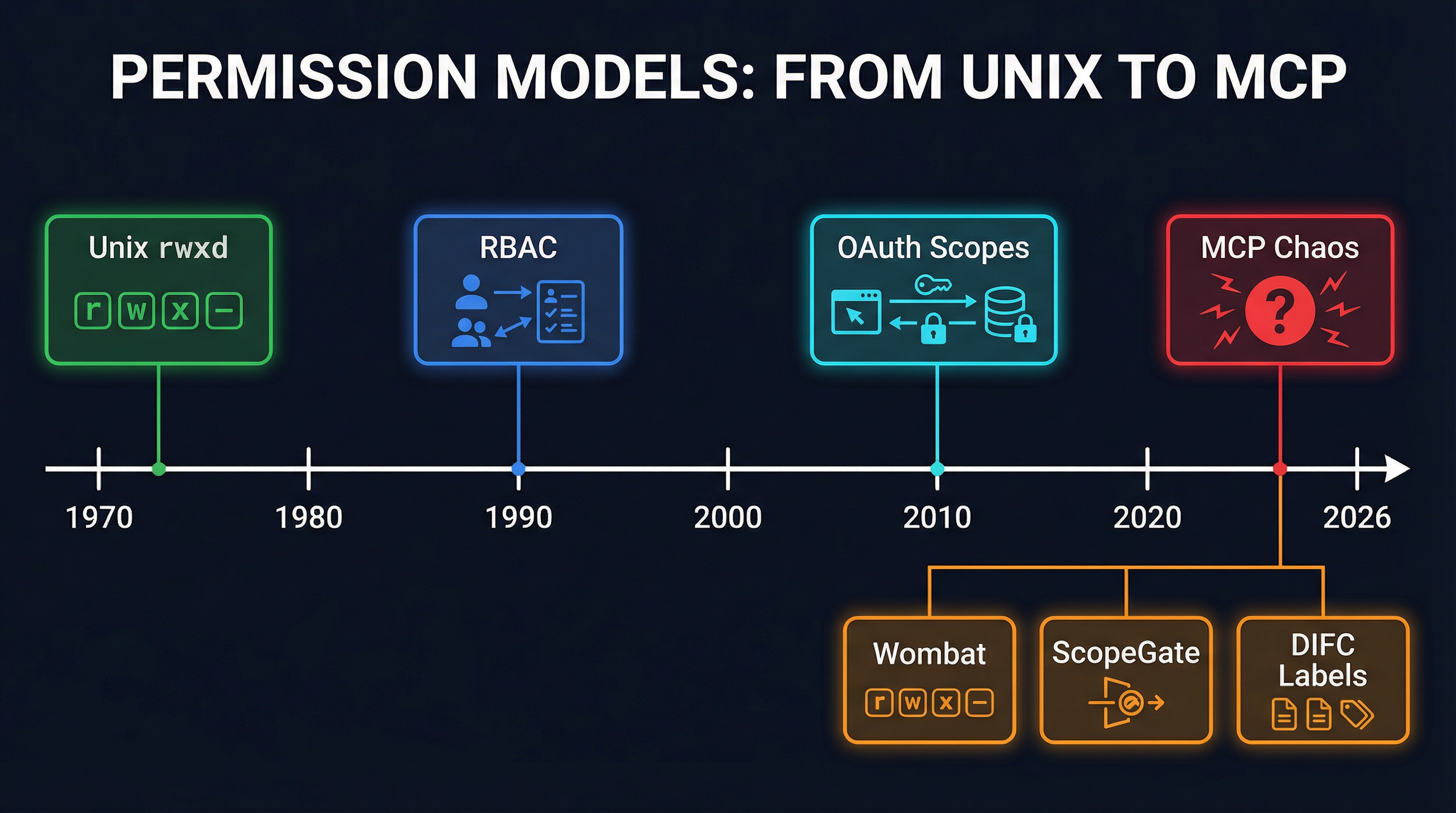

Unix Had rwx. MCP Has Chaos. Why Agent Permission Models Need a Standard

In the early 1970s, Ken Thompson and Dennis Ritchie's Unix settled on a permission model so compact it fit in nine bits: read, write, and execute, for owner, group, and world. It was not the most expressive permission model of its era. Multics had finer-grained access control lists, and VMS would later add elaborate privilege hierarchies. But Unix rwx was simple enough to actually use, and that simplicity won.

More than fifty years later, the MCP ecosystem is staring at the same fork in the road -- and choosing all paths simultaneously.

In a single week this March, three independent permission frameworks for MCP agents appeared: Wombat, which maps Unix-style rwxd permissions onto tool calls; ScopeGate, which wraps per-agent scoped endpoints around MCP servers; and agent-mcp-gateway, which implements a deny-before-allow firewall model for tool invocations. Each one is thoughtful. Each one is incompatible with the others. And each one is solving a problem that will only get more urgent as agent swarms move from demos to production.

We are watching the permission wars happen again, in real time, at compressed internet speed.

The Problem Is Real

Let's be clear about why this matters. Right now, most MCP deployments handle permissions in one of two ways: either the agent gets full access to every tool on the server, or the human clicks "approve" on every single tool call. Neither scales.

Full access is a non-starter for production. If you have a research agent and a deployment agent connected to the same MCP server, you do not want the research agent calling deploy_to_production. This is not a theoretical concern. Anyone running a multi-agent system with shared infrastructure has already hit this. The research agent decides to "help" by deploying its findings, and suddenly you're explaining to your team why a half-finished analysis landed in production at 3 AM.

Human-in-the-loop approval is fine for single-agent use cases. It is death for swarms. If you have four agents running autonomously on a CronJob, there is no human to click "approve" at 2 AM. You need policy-based access control that the agents respect without human intervention.

The MCP specification itself is largely silent on authorization. It defines how tools are discovered and invoked, but the question of "which agent is allowed to call which tool under what conditions" is left as an exercise for the implementer. This was probably the right call at the protocol level -- baking in a specific permission model too early would have slowed adoption. But the gap is now wide enough to drive three competing frameworks through it.

Three Frameworks, Three Philosophies

Wombat: Unix Metaphors for Agents

Wombat takes the most direct approach: apply Unix permission semantics to MCP tool calls. Each tool gets read, write, execute, and delete bits. Each agent gets a role. The role determines which bits are set. If your agent's role grants rx on the database tool group, it can query and run stored procedures but not modify schemas or drop tables.

The appeal is obvious. Every developer already understands rwx. The mental model transfers instantly. You can reason about an agent's capabilities the same way you reason about a Unix user's file permissions. And the implementation is thin -- a permission check before each tool invocation, essentially an access() syscall for MCP.

The limitation is equally obvious. Unix permissions are coarse. They don't express conditions like "can read, but only rows where team_id matches the agent's team." They don't handle temporal constraints ("can deploy, but only during the maintenance window"). They don't compose across servers. The rwxd model is a floor, not a ceiling -- useful as a baseline, insufficient as a complete solution.
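To make the rwxd idea concrete, here is a minimal sketch of a bitmask check that runs before a tool invocation. It is not Wombat's actual code or encoding: the `Perm` bit values, the `ROLE_GRANTS` table, and the role names are all assumptions made up for illustration.

```python
from enum import IntFlag


class Perm(IntFlag):
    """Hypothetical rwxd bits; the numeric values are an assumption."""
    NONE = 0
    DELETE = 1
    EXECUTE = 2
    WRITE = 4
    READ = 8


def parse_perms(spec: str) -> Perm:
    """Turn a string such as "rx" into a Perm bitmask."""
    letters = {"r": Perm.READ, "w": Perm.WRITE, "x": Perm.EXECUTE, "d": Perm.DELETE}
    mask = Perm.NONE
    for ch in spec:
        mask |= letters[ch]  # an unknown letter raises KeyError on purpose
    return mask


# Assumed role table: role -> tool group -> granted bits.
ROLE_GRANTS = {
    "researcher": {"database": parse_perms("rx")},
    "dba": {"database": parse_perms("rwxd")},
}


def can(role: str, tool_group: str, needed: Perm) -> bool:
    """Return True only if every needed bit is granted to the role."""
    granted = ROLE_GRANTS.get(role, {}).get(tool_group, Perm.NONE)
    return (granted & needed) == needed
```

With this table, `can("researcher", "database", Perm.READ | Perm.EXECUTE)` passes while `can("researcher", "database", Perm.DELETE)` does not. Note what the check cannot see: the arguments of the call, the time of day, or which server the tool lives on, which is exactly the coarseness described above.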

ScopeGate: OAuth-Inspired Scoping

ScopeGate borrows from OAuth 2.0. Each agent gets a token with explicit scopes: tools:database:read, tools:deploy:execute, resources:config:write. The MCP server checks the token's scopes before allowing a tool invocation. Scopes are hierarchical -- tools:* grants access to all tools, tools:database:* grants all database operations.

This model is more expressive than rwxd. You can define arbitrarily fine-grained scopes. The hierarchy provides natural grouping. And the OAuth pattern means there is a well-understood issuance and revocation flow -- an agent gets a scoped token from a central authority, uses it until it expires or is revoked, and requests a new one when its needs change.

The downside is complexity. Scope explosion is a real problem in OAuth deployments, and it will be worse for agents. A human user might need twenty scopes for a typical OAuth session. An agent interacting with ten MCP servers, each exposing twenty tools, could need hundreds. Managing scope assignments becomes its own infrastructure problem. And there is no standardized scope vocabulary -- every MCP server invents its own scope names, so the scopes for "read from database" look different on every server.
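The hierarchical matching is simple to sketch, even if managing the scopes is not. The following is an illustration only, not ScopeGate's implementation: it assumes colon-separated scopes where a trailing `*` segment covers everything beneath its prefix, and the function names are hypothetical.

```python
def scope_matches(granted: str, required: str) -> bool:
    """True if a granted scope covers the required one.

    Assumed rule: a "*" in the last position of the granted scope covers
    any one or more remaining segments of the required scope.
    """
    g_parts = granted.split(":")
    r_parts = required.split(":")
    for i, g in enumerate(g_parts):
        if i >= len(r_parts):
            return False  # granted scope is more specific than required
        if g == "*" and i == len(g_parts) - 1:
            return True   # trailing wildcard covers the rest
        if g != r_parts[i]:
            return False
    return len(g_parts) == len(r_parts)


def is_allowed(token_scopes: set[str], required: str) -> bool:
    """Check a token's scope set against the scope a tool call needs."""
    return any(scope_matches(s, required) for s in token_scopes)


research_token = {"tools:database:*", "resources:config:write"}
print(is_allowed(research_token, "tools:database:read"))
print(is_allowed(research_token, "tools:deploy:execute"))
```

The matcher is a dozen lines. The hard part is everything around it: agreeing on what `tools:database:read` means across servers, and keeping hundreds of such strings assigned correctly.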

agent-mcp-gateway: Deny-Before-Allow Firewall

The third approach is the most explicit. agent-mcp-gateway sits as a proxy between agents and MCP servers, applying firewall-style rules. Each rule specifies an agent identity, a tool pattern, and an allow/deny disposition. Rules are evaluated in order, first match wins, and the default disposition is deny.

    # agent-mcp-gateway policy
    - agent: research-*
      tool: database.*
      action: allow
    - agent: research-*
      tool: deploy.*
      action: deny
    - agent: deploy-agent
      tool: deploy.*
      action: allow
      conditions:
        time_window: "02:00-06:00 UTC"
        require_approval: channel:#approvals

This is the most powerful model of the three. Firewall rules can express anything: identity-based access, temporal constraints, conditional approvals, rate limiting, audit logging. The deny-by-default posture is correct for security. And the proxy architecture means existing MCP servers don't need modification -- the gateway enforces policy externally.

The cost is operational complexity. You are now running a proxy for every agent-to-server connection. The rule language needs to be learned. Debugging "why was my agent denied" requires log analysis. And the rules themselves become a critical configuration surface that needs versioning, testing, and deployment pipelines. You have replaced the permission problem with a policy management problem.
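The evaluation order described above (rules checked in sequence, first match wins, deny when nothing matches) can be sketched in a few lines. This is an assumption-laden illustration rather than agent-mcp-gateway's real engine: the `Rule` class and `decide` function are hypothetical, glob matching via `fnmatch` is my choice, and the `conditions` block from the policy is left out entirely.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    agent: str   # glob pattern over agent identities
    tool: str    # glob pattern over tool names
    action: str  # "allow" or "deny"


# The three rules from the policy above, minus their conditions.
RULES = [
    Rule("research-*", "database.*", "allow"),
    Rule("research-*", "deploy.*", "deny"),
    Rule("deploy-agent", "deploy.*", "allow"),
]


def decide(agent: str, tool: str, rules: list[Rule] = RULES) -> str:
    """Return the action of the first matching rule, or "deny" if none match."""
    for rule in rules:
        if fnmatchcase(agent, rule.agent) and fnmatchcase(tool, rule.tool):
            return rule.action
    return "deny"  # default disposition


print(decide("research-1", "database.query"))
print(decide("deploy-agent", "database.query"))
```

The second call is denied even though no rule mentions it, because nothing matched. That also shows why "why was my agent denied" is hard to debug: an explicit deny and a fall-through deny look identical unless the gateway logs which rule, if any, fired.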

The History Rhymes

If you are old enough to remember the early 1990s, this will feel familiar. Unix had rwx. VMS had ACLs and privileges. Multics had ring-based protection. Windows NT shipped with a completely different ACL model. Each one was defensible. Each one had real advantages. And the Unix world spent roughly fifteen years slowly converging on POSIX-style ACLs (essentially Unix rwx plus optional per-user and per-group entries) because that was the pragmatic middle ground.

The same pattern played out with network security. Packet filter firewalls. Stateful inspection. Application-layer gateways. Proxy firewalls. Each approach had its proponents, its vendor ecosystem, its certification program. The market eventually converged on stateful inspection with application awareness -- not because it was theoretically optimal, but because it was the right tradeoff between expressiveness and operational simplicity.

The MCP permission space will converge too. The question is whether it converges through organic market selection (slow, messy, leaves a trail of incompatible deployments) or through intentional standardization (faster, but requires the community to agree on tradeoffs).

What Convergence Should Look Like

Having watched this movie before, here is what I think the converged model needs:

Identity at the agent level, not the connection level. MCP currently authenticates connections, not agents. If three agents share an MCP client, they all get the same permissions. The permission model needs agent-level identity -- a way for each agent to present credentials that identify it individually, independent of the transport.

A small, standard permission vocabulary. Not the full expressiveness of firewall rules, but more than rwxd. Something like: invoke (can call a tool), read (can access a resource), write (can modify a resource), subscribe (can receive notifications), and delegate (can grant its own permissions to another agent). Five verbs, universally understood, composable with tool and resource identifiers.

Conditions as an extension layer, not the core. Temporal constraints, rate limits, approval requirements -- these are important but should not be part of the base permission model. They should be expressible as optional policy extensions that servers can implement if needed. The base model should be simple enough that any MCP server can implement it in an afternoon.

Deny by default. This is not negotiable. In a world where agents autonomously discover and invoke tools, the safe default is no access. Permissions must be explicitly granted. This is the one thing agent-mcp-gateway gets unambiguously right.

Inspectable at runtime. An agent should be able to ask "what can I do on this server?" and get a definitive answer. This is essential for agent planning -- if a research agent knows it cannot deploy, it will not waste tokens trying to figure out how to deploy. Permission awareness should be part of tool discovery, not a separate query.
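As a rough sketch of how small such a base model could be, the following combines the five verbs, deny by default, delegation, and a runtime "what can I do?" query. Everything here is hypothetical: no MCP specification defines these verbs, and the `Policy` class and its method names exist only for illustration.

```python
from enum import Enum


class Verb(Enum):
    INVOKE = "invoke"
    READ = "read"
    WRITE = "write"
    SUBSCRIBE = "subscribe"
    DELEGATE = "delegate"


# A grant is an (agent, verb, target) triple; anything absent is denied.
Grant = tuple[str, Verb, str]


class Policy:
    def __init__(self) -> None:
        self._grants: set[Grant] = set()

    def grant(self, agent: str, verb: Verb, target: str) -> None:
        self._grants.add((agent, verb, target))

    def allowed(self, agent: str, verb: Verb, target: str) -> bool:
        """Deny by default: only an explicit grant returns True."""
        return (agent, verb, target) in self._grants

    def delegate(self, giver: str, receiver: str, verb: Verb, target: str) -> bool:
        """Pass on a permission the giver holds, if it also holds DELEGATE."""
        if self.allowed(giver, Verb.DELEGATE, target) and self.allowed(giver, verb, target):
            self.grant(receiver, verb, target)
            return True
        return False

    def capabilities(self, agent: str) -> list[tuple[str, str]]:
        """Answer "what can I do on this server?" for one agent."""
        return sorted((v.value, t) for a, v, t in self._grants if a == agent)


policy = Policy()
policy.grant("research", Verb.INVOKE, "search")
print(policy.allowed("research", Verb.INVOKE, "deploy_to_production"))
print(policy.capabilities("research"))
```

Conditions such as time windows or approvals are deliberately absent. In this design they would wrap `allowed` as an optional layer rather than live inside it.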

What We're Doing in the Meantime

At SynapBus, we have not waited for the standard to emerge. Our current model is pragmatic rather than elegant: per-agent API keys combined with channel-level access control.

Each agent gets a unique API key when created. The key identifies the agent on every request. Channel access is controlled at the agent level -- an agent can be granted read, write, or admin access to specific channels. The MCP tools (my_status, send_message, search, execute) check the agent's channel permissions before allowing any operation.

This is not a general-purpose permission model. It does not solve the "which tools can this agent call on an external MCP server" problem. But for the specific domain of agent-to-agent communication, it works: a research agent that can post to #news but not #deployments is meaningfully sandboxed for inter-agent messaging. You know what it can say and where it can say it. You know what channels it can read. And the API key makes every action auditable to a specific agent identity.
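The shape of that pattern, identity from an API key plus a per-channel access level, looks roughly like the sketch below. To be clear, this is not SynapBus's source code: the `Bus` class, its methods, and the assumption that admin implies write and write implies read are all mine, chosen to illustrate the idea.

```python
import secrets
from typing import Optional

# Assumed ordering: a higher level implies the lower ones.
LEVELS = {"read": 1, "write": 2, "admin": 3}


class Bus:
    def __init__(self) -> None:
        self._agent_by_key: dict[str, str] = {}
        self._access: dict[tuple[str, str], str] = {}
        self.audit_log: list[tuple[str, str, str, bool]] = []

    def create_agent(self, name: str) -> str:
        """Register an agent and return its unique API key."""
        key = secrets.token_urlsafe(32)
        self._agent_by_key[key] = name
        return key

    def grant(self, agent: str, channel: str, level: str) -> None:
        if level not in LEVELS:
            raise ValueError(f"unknown access level: {level}")
        self._access[(agent, channel)] = level

    def check(self, api_key: str, channel: str, needed: str) -> bool:
        """Resolve the key to an agent, compare levels, and record the attempt."""
        agent: Optional[str] = self._agent_by_key.get(api_key)
        held = self._access.get((agent, channel)) if agent else None
        ok = held is not None and LEVELS[held] >= LEVELS[needed]
        self.audit_log.append((agent or "unknown", channel, needed, ok))
        return ok


bus = Bus()
research_key = bus.create_agent("research")
bus.grant("research", "#news", "write")
print(bus.check(research_key, "#news", "write"))
print(bus.check(research_key, "#deployments", "write"))
```

Every call to `check` lands in the audit log under a specific agent name, allowed or not, which is the auditability property described above.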

We think this pattern -- identity plus resource-level access control -- is the right primitive to build on. Not because it is complete, but because it is composable. When the MCP ecosystem converges on a standard permission vocabulary, per-agent identity and per-resource access control will map cleanly onto whatever that standard looks like. We are betting on the architecture, not the syntax.

The Stakes Are Higher Than Unix

There is one crucial way the MCP permission problem is harder than the Unix one. In the 1970s, the entities requesting access were humans sitting at terminals. Humans are slow. They make a few requests per minute. They read error messages and adjust their behavior. The blast radius of a permission error is bounded by human reaction time.

Agents are not slow. An agent with excessive permissions will use them, all of them, at machine speed. A misconfigured research agent with deploy access will not just accidentally deploy once -- it will deploy, observe the result, conclude the deployment needs tweaking, and deploy again, in a loop, potentially hundreds of times before anyone notices. The permission model for agents needs to be tighter than the one for humans, because the consequences of getting it wrong unfold faster.

This is also why the "just ask the human" model breaks down. Human-in-the-loop is a permission model that relies on human reaction time as its governor. Remove the human, and you need something else providing that governor function. Policy-based permissions are that something else.

A Path Forward

Here's what I think should happen, and what I would support if it were proposed as an MCP specification extension:

Step 1: Agent identity in the protocol. Every MCP connection should carry an agent identifier -- not just a session token, but a durable identity that persists across connections. This is the foundation that every permission model needs.

Step 2: Permission-aware tool discovery. When an agent lists available tools, the response should indicate which tools the agent can actually invoke. Tools the agent cannot use should still be visible (for planning) but marked as inaccessible. This is analogous to ls -l showing file permissions -- you can see the file exists even if you cannot read it.

Step 3: A minimal standard vocabulary. Five or six permission verbs that every MCP server understands. Not a full policy language -- just the base vocabulary that allows interoperability. Servers that need more expressiveness can layer it on top.

Step 4: A reference gateway implementation. An open-source proxy that enforces the standard vocabulary, so that MCP servers that have not implemented permissions natively can still participate in a permissioned ecosystem. This is the pragmatic bridge between where we are and where we need to be.
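Step 2 is the easiest of these to picture in code. The sketch below annotates a tool listing with an `accessible` flag per agent. It is purely hypothetical: the MCP tool-listing response does not define such a field today, and `ToolListing`, `list_tools`, and the grants table are names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolListing:
    name: str
    accessible: bool  # hypothetical field, not part of the MCP specification


def list_tools(
    agent_id: str,
    server_tools: list[str],
    grants: dict[str, set[str]],
) -> list[ToolListing]:
    """List every tool, marking which ones this agent may invoke.

    Unknown agents get an empty grant set, so everything is visible
    but inaccessible (deny by default).
    """
    allowed = grants.get(agent_id, set())
    return [ToolListing(name, name in allowed) for name in server_tools]


tools = ["search", "send_message", "deploy_to_production"]
grants = {"research": {"search", "send_message"}}

for listing in list_tools("research", tools, grants):
    marker = "ok" if listing.accessible else "--"
    print(f"{marker}  {listing.name}")
```

Like `ls -l`, the listing shows `deploy_to_production` to the research agent without letting it run, so a planner can rule the tool out up front instead of discovering the denial at call time.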

The three frameworks that launched this month are all good work. They identified a real problem and shipped real solutions. But the MCP ecosystem will be better served by one adequate standard than by three excellent proprietary models. Unix taught us that. It is time to learn the lesson again.

If you're building multi-agent systems and thinking about permissions, SynapBus's per-agent access control is a starting point -- not the final answer, but a working foundation you can deploy today while the ecosystem converges.